
In the incidents we’ve looked at here at Realz, the most important pattern is not simply that fake images are circulating. It is that they are being treated as evidence, signals, or proof at the exact moment people are under pressure to react.

This is only a small editorial sample: seven distinct reported incidents across five countries over a few days in late April 2026. It is not a complete picture of everything happening globally. But even in this limited set, the practical issue is clear. AI-generated and manipulated visuals are creating trust problems that spill into politics, public safety, local communities, and reputation.

That matters because the harm often does not begin with the image alone. It begins with the decision the image seems to justify.

A small sample, but a wide range of harms

The incidents reviewed here span several different contexts.

In India, police booked a man accused of using AI to create a false photo of himself with Vice President C.P. Radhakrishnan, allegedly to project influence after an official event in Rishikesh. In that case, the image appears to have been used as false proof of access and status.

In Utah, Moab police said an AI-generated image falsely showed an ICE agent at a high school. Authorities publicly debunked the image and warned that hoaxes causing public alarm can become criminal matters. Here, the issue was not prestige or political theater but public confusion around a sensitive law-enforcement presence.

In South Korea, police arrested a man accused of distributing an AI-generated image of an escaped wolf in Daejeon. According to reporting, the fake image was shared widely enough that city officials and major media outlets used it, and police said it delayed the animal’s recapture by nine days. In that case, a single fabricated visual appears to have affected operational response.

In New Zealand, police and NetSafe warned about an AI-generated image showing body bags at the scene of a triple homicide in Hastings. Reporting emphasized not only that the image was fake, but that fabricated tragedy imagery can distress already traumatized communities and blur the line between documentary reporting and invention.

Other incidents in this sample point to reputational and political harm. A Baltimore County councilmember denounced an altered image portraying him as antisemitic. In the Basque Country, an AI-generated image of nationalist leader Aitor Esteban posted during coalition tensions reportedly contributed to a canceled political meeting. And in the United States, reporting described President Donald Trump sharing an AI-generated image of himself with a rifle in the context of a geopolitical warning toward Iran.

These are different situations with different stakes. Some involve alleged fraud, some apparent hoaxes, some reputational attacks, and some politically charged visual messaging. But they all raise the same underlying question: what happens when a synthetic image is accepted, even briefly, as a meaningful representation of reality?

The image is rarely the whole story

One useful way to read this sample is to look beyond whether an image was AI-generated and ask what role it played.

In several cases, the image functioned as a credibility shortcut.

- The fake photo with India’s vice president appears to have been used to suggest insider access.

- The false ICE image implied official presence in a sensitive setting.

- The fake wolf sighting image appeared to offer documentary evidence during an active public-safety search.

- The Hastings crime-scene image simulated on-the-ground reporting during a moment of public grief and uncertainty.

That distinction matters. The problem is not only image falsification in the abstract. It is visual impersonation and visual evidence abuse: the use of an image to borrow the authority of a public official, a crisis scene, a law-enforcement event, or a real-world emergency.

In that sense, these incidents look less like isolated content problems and more like verification failures. The image becomes dangerous when people, institutions, or audiences treat it as enough to act on.

A narrow but visible pattern: synthetic images are touching real workflows

This sample is too small and too mixed to support sweeping claims about a global trend line. But it does support a narrower observation.

Across these reported cases, manipulated and synthetic visuals were not confined to obvious parody or harmless experimentation. They intersected with real workflows:

- police response

- emergency information sharing

- political negotiations

- public trust in officials

- local media circulation

- social proof and status signaling

That is an important shift in emphasis. When a fabricated image enters a live civic or institutional process, the cost is not limited to whether viewers are fooled. The cost can include wasted time, delayed response, reputational injury, confused audiences, and poorer decisions.

The South Korea case is the clearest example in this sample because the reported operational impact was unusually concrete: police said the fake wolf image delayed recapture efforts and disrupted primary duties. The New Zealand and Moab cases point in the same direction, though with different kinds of harm: confusion, distress, and public alarm around sensitive events.

Political and reputational uses are especially hard to unwind

A second pattern in the incidents reviewed here is that politically or reputationally charged images can have outsized consequences even without long-term deception.

The Basque Country case shows how an AI-generated image can escalate already tense negotiations. The reported impact was not an election result or a mass disinformation campaign. It was something more immediate and more concrete: a deterioration in political relations serious enough to reportedly contribute to a canceled meeting.

The Baltimore case points to another common feature of authenticity harm: once a manipulated image frames someone as racist, extremist, or otherwise hateful, the correction often has to work harder than the original post. Even when the deception is challenged, the reputational burden remains with the target.

The reported Trump image sits somewhat differently. It was not described as a hoax aimed at tricking audiences into believing a real photograph existed independently of the post. It was shared openly as AI-generated imagery in a high-stakes political context. Even so, the case still fits the broader trust question, because synthetic visuals used by powerful public figures can influence tone, interpretation, and public understanding even when their artificial nature is not hidden.

Why “just look closely” is not a serious answer

The broader academic and institutional guidance on synthetic media is strong on one point: human judgment alone is not a dependable control for deepfakes and synthetic media.

That matters here because several incidents involved images that circulated in emotionally charged or time-sensitive settings. In those moments, people do not carefully inspect artifacts, backgrounds, lettering, or anatomical details. They react to context, timing, and implied authority.

The New Zealand case is a good example. Reporting noted apparent AI markers in the image, including a misspelling on an ambulance. But that kind of clue is inconsistent and easy to miss. More importantly, by the time someone notices it, the image may already have spread and done harm.

The practical implication is simple: verification cannot depend on visual intuition alone. If an image carries operational, political, legal, or reputational weight, it needs context checks around it.

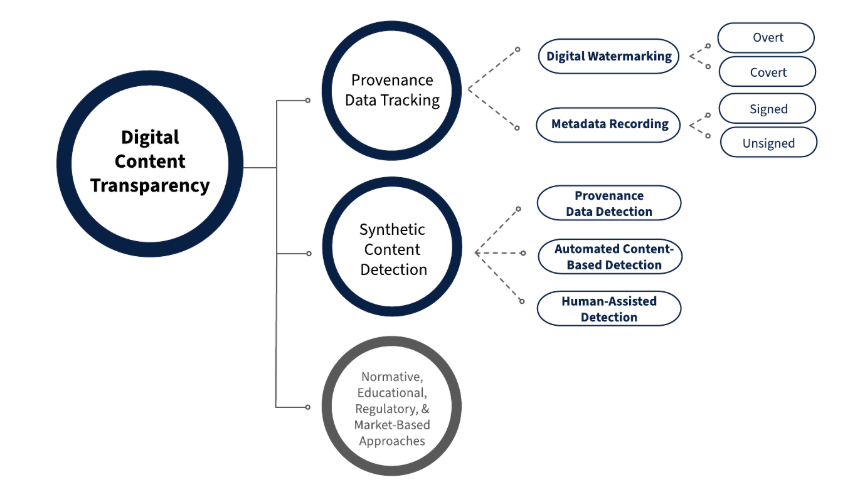

Source: NIST AI 100-4, Fig. 2, 2024. Adapted with attribution.


What organizations and public institutions can take from this sample

The reference material behind this piece supports a cautious but clear management point: synthetic-image risk is best treated as a trust and decision-quality problem, not only as a media-literacy problem.

In plain terms, that means the key question is often not “Can our team spot a fake image?” It is “What do we do before acting on a consequential image?”

In this sample of incidents we’ve reviewed here at Realz, a few practical disciplines stand out:

1. Verify the source, not just the image

If a visual appears to show an official, officer, crime scene, or emergency event, the first check should be whether the source is authoritative and whether the event is corroborated elsewhere.

2. Treat high-emotion visuals as higher-risk inputs

Images tied to violence, immigration enforcement, political conflict, or public danger can trigger fast sharing and fast judgment. That makes them especially important to pause and verify.

3. Build response paths for authenticity disputes

If a false image begins circulating, institutions need a way to respond quickly through official channels. The Moab police response is notable because authorities publicly addressed the image directly rather than leaving the false claim unanswered.

4. Separate detection from certainty

Detection tools and visual clues can help, but they are supporting signals, not final proof. Provenance, source confirmation, and contextual verification still matter.

5. Assume the burden of verification is rising

Even in this limited sample, fake or synthetic images appeared across local politics, public safety, diplomacy, and community tragedy. That does not mean every image is suspect. It means more images now deserve process-based verification before they are treated as evidence.
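For teams that want to write these disciplines down as an explicit rule, the sketch below shows one possible shape in Python. It is an illustration only, not a tool described in any of the reporting: the `ImageReport` fields, the context labels, and the three outcomes (`act`, `verify`, `hold`) are all assumptions made for this example.

```python
from dataclasses import dataclass

# Hypothetical labels for the high-emotion settings named in discipline 2.
HIGH_RISK_CONTEXTS = {
    "violence",
    "immigration_enforcement",
    "political_conflict",
    "public_danger",
}


@dataclass
class ImageReport:
    """Illustrative record of what a reviewer knows about an image."""
    source_is_authoritative: bool   # e.g. published through an official channel
    corroborated_elsewhere: bool    # the event is independently confirmed
    context: str                    # label chosen by the reviewer
    detector_flagged: bool = False  # result of a detection tool, if one was run


def triage(report: ImageReport) -> str:
    """Return 'act', 'verify', or 'hold' for a consequential image."""
    # Discipline 1: check the source and corroboration, not just the pixels.
    source_ok = report.source_is_authoritative and report.corroborated_elsewhere

    # Discipline 2: unverified images in high-emotion contexts are paused.
    if not source_ok and report.context in HIGH_RISK_CONTEXTS:
        return "hold"

    # Discipline 4: a detector flag is a supporting signal, so it sends the
    # image back for more checks instead of settling the question either way.
    if source_ok and not report.detector_flagged:
        return "act"
    return "verify"


if __name__ == "__main__":
    # An unverified image tied to immigration enforcement is held.
    print(triage(ImageReport(False, False, "immigration_enforcement")))
```

The design choice worth noting is that a clean detector result never upgrades an image on its own. Only source confirmation plus corroboration can do that, which mirrors the point that detection is separate from certainty.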

The trust problem is becoming more ordinary

One striking feature of this small set of reported cases is how ordinary many of the settings are.

These were not only celebrity hoaxes or elaborate intelligence operations. They involved a school, a local crime scene, a municipal animal search, a regional political dispute, a local councilmember, and a status-seeking false photo around a public event. That ordinariness is part of the story.

Synthetic-image risk is no longer confined to rare edge cases. In the incidents reviewed here, it appears in the everyday places where people make fast judgments about what is happening, who was present, and what an image proves.

That does not justify panic, and this sample is far too small to support grand conclusions. But it does support one sober takeaway: visual trust is becoming an operational issue.

When a fake image is persuasive enough to shape reaction, delay a response, damage a reputation, or distort a negotiation, the incident is no longer just about media manipulation. It is about the systems that decide what to believe next.