
In this sample of incidents we’ve reviewed here at Realz, the geography is limited and the timeframe is short: three distinct incident clusters, covered across several reports, spanning India, Italy, and Saudi Arabia around 5-6 May 2026. That makes this a narrow editorial snapshot, not a comprehensive survey. Still, even in a small set like this, a few useful patterns stand out.

The first is that image manipulation is not only a problem of embarrassment or internet confusion. It is increasingly a trust problem. The incident clusters reviewed here show synthetic or manipulated visuals being used to support false claims about public rules, misrepresent victims of a real-world tragedy, and circulate non-consensual imagery of a sitting prime minister.

The second is that the harm often comes from the surrounding claim as much as from the image itself. A fabricated sign can be used to suggest a policy change that never happened. A synthetic portrait can be attached to a real disaster and presented as documentary evidence. A fake intimate image can serve as a vehicle for political attack, harassment, or reputational damage, even when the target can publicly deny it.

That is why these incidents are better understood as authenticity harms: they increase uncertainty around what is real, raise the burden of verification, and exploit the speed of social sharing.

Two different uses of fake imagery

In the incidents we’ve looked at here at Realz, the cases fall into two broad categories.

1. Synthetic images used to strengthen a false narrative

One reported case involved social posts claiming Saudi authorities had banned photography and videography at Hajj sites. The claim was false, and the accompanying image also appeared to be AI-generated. Reporting cited visible inconsistencies, including a Gemini watermark and text in Urdu rather than Arabic. In this case, the image was not the whole story by itself. It functioned as false evidence for a false policy claim.

A separate case from India followed a similar pattern, but in a much more emotionally charged context. An AI-generated image was shared as if it showed a mother and son who had died in the Jabalpur boat tragedy. Reporting said reverse-image checks found no credible source for the image, an AI-detection tool judged it highly likely to be synthetic, and the Jabalpur collector clarified that it was unrelated to the incident. Here again, the image appears to have been used to give false emotional and documentary weight to a real event.

These two cases are different in context, but they point to the same practical issue: fake images do not need to be technically extraordinary to be effective. They only need to arrive at the right moment, attached to a claim people are already inclined to believe or share.

2. Non-consensual and deceptive imagery aimed at a public figure

Most of the reporting in this set concerns Italian Prime Minister Giorgia Meloni, who publicly denounced the circulation of AI-generated images depicting her in lingerie. Across multiple reports, the core facts are consistent: Meloni said the images were fake, described them as a political attack, warned that deepfakes can deceive and manipulate, and urged people to verify content before sharing it.

Some reports framed the episode primarily as political impersonation, while others also highlighted reputational harm and the broader issue of non-consensual synthetic imagery. One report also referred to an ongoing libel case tied to earlier deepfake pornographic images using her likeness.

Because these accounts are based on reported coverage rather than primary forensic disclosure, it is sensible to stay cautious about technical specifics beyond what the reporting supports. But the broader authenticity issue is clear enough: a public figure’s likeness was reportedly used without consent in imagery designed to look real enough to circulate as genuine.

Meloni’s own response is notable not because public figures are unusual targets, but because she explicitly pointed to an asymmetry that matters. She can answer publicly. Many others cannot. In that sense, this is not only a story about one politician. It is also a reminder that the same methods can be turned on people with far less reach, protection, or institutional support.

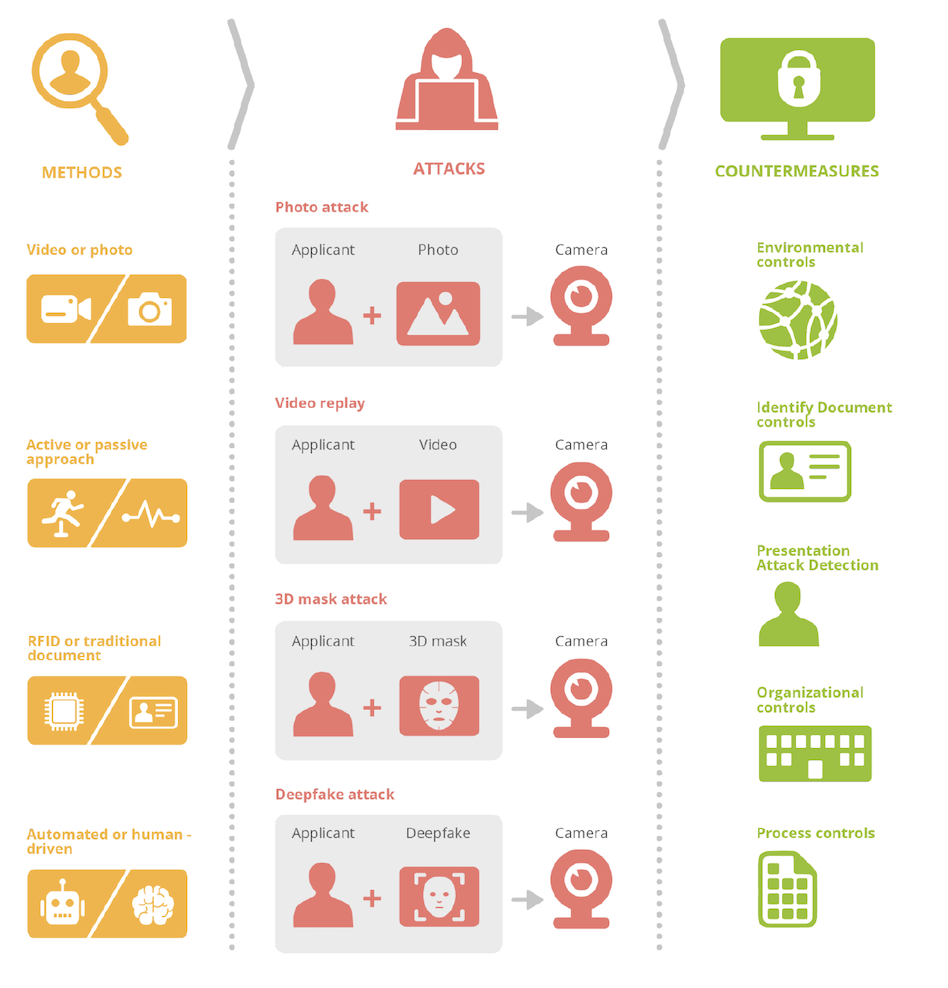

Source: ENISA, Remote Identity Proofing - Attacks & Countermeasures, 2022. Reused with attribution.


As a society, we should perhaps put better controls in place to identify attacks like this faster, so that fabricated images do not stay in reporting or circulation for long.

What this small sample suggests

This small set of incident clusters supports a narrow conclusion rather than a sweeping one.

It suggests that AI-generated imagery is already being used across very different contexts:

- to back false public-information claims,

- to misrepresent real victims and events,

- and to target a recognizable individual with deceptive or non-consensual visual content.

What unites those uses is not a single motive. In the cases reviewed here, the apparent goals range from misinformation to reputational damage to political attack. But the operational pattern is similar: an image is used as a trust shortcut.

People tend to treat visuals as evidence, especially when the image appears to confirm an emotionally loaded claim. That is exactly why verification matters so much. And it is also why a manipulated image can do damage even after it is debunked: it consumes attention, spreads quickly, and forces officials, journalists, platforms, or targets to spend time disproving it.

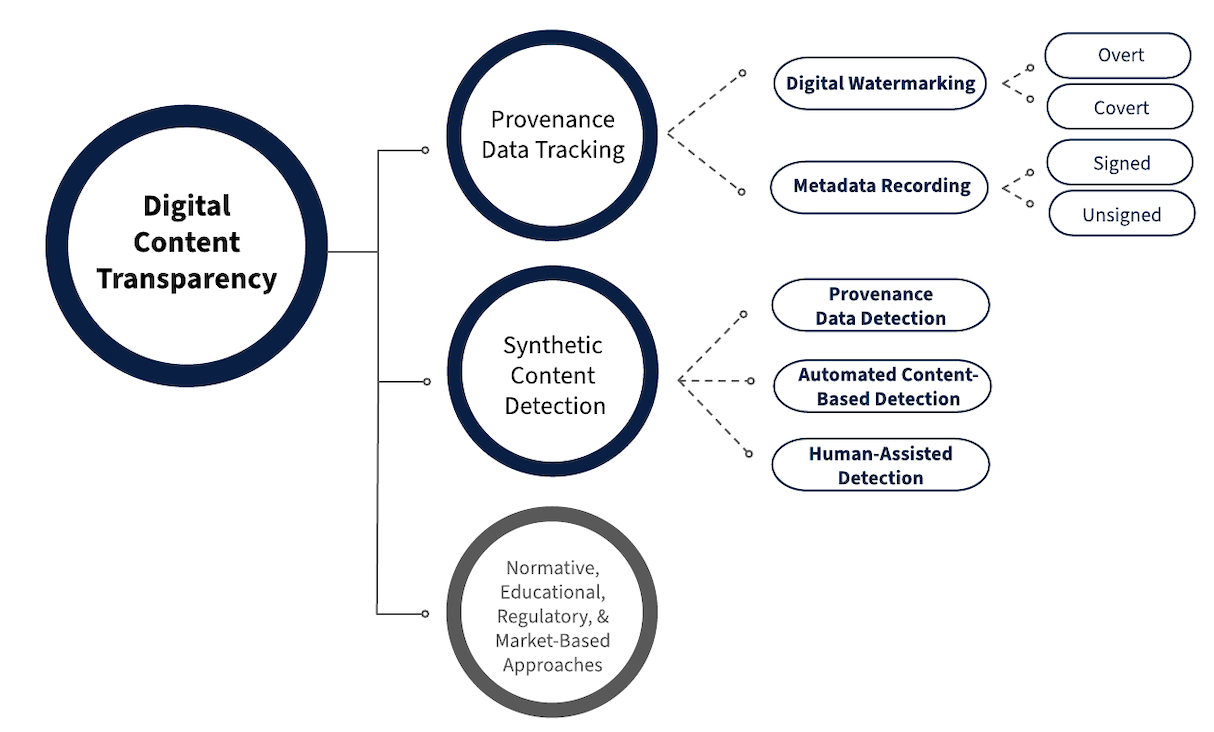

The background material reviewed for this piece supports that framing. NIST’s work on synthetic content transparency treats provenance, authentication, labeling, and detection as complementary rather than interchangeable. In practical terms, that means there is no single signal that solves the problem. Detection tools may help. Provenance may help. Official clarification may help. But none of those removes the need to verify the wider claim attached to the image.

That point is especially relevant to the Jabalpur case. The reporting referenced an AI-detection score, but the stronger editorial lesson is not “the tool proved it.” It is that verification came from several layers together: lack of credible sourcing, tool-based assessment, and direct clarification from the local authority.

Source: NIST AI 100-4, Fig. 2, 2024. Adapted with attribution.


The real issue is decision quality

In visual-authenticity incidents, the most important question is often not whether an image was impressively made. It is what the image was trying to make people do.

In this sample, the intended effects appear to include:

- believing a policy that did not exist,

- accepting a fake image as evidence tied to a real tragedy,

- or reacting to an invented intimate image as if it were authentic.

That is why these incidents matter beyond media literacy alone. They affect decision quality: what citizens believe, what audiences share, what public figures must respond to, and what institutions need to correct under pressure.

For newsrooms, public agencies, and communications teams, this increases the cost of routine verification. For individuals, it means that seeing an image is no longer enough reason to treat it as documentary proof. And for leaders, the challenge is not to declare that everything is fake. It is to make sure important decisions do not depend on unverified visuals moving at social-media speed.

A practical takeaway from the cases reviewed here

The available material supports a modest but useful takeaway: images now need to be handled more like claims than like proof.

In the incidents reviewed here, the most reliable responses were not based on visual intuition alone. They depended on checking source context, comparing the claim against official information, using supporting technical analysis where available, and seeking direct clarification from an authoritative party.

That is a more realistic habit than asking people to simply “look more carefully.” The background research reviewed for this piece also supports caution on that point: human judgment is important, but not dependable as a stand-alone deepfake control.
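For teams that want to turn this habit into a routine, the layered approach can be sketched as a small checklist in code. This is only an illustration under stated assumptions, not a tool used in any of the reported cases: the `ImageClaimChecks` record, the `assess` function, the 0.9 detector threshold, and the rule that at least two independent signals are needed are all hypothetical choices made for this example. The point it shows is that a detection score on its own never settles the question.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ImageClaimChecks:
    """Hypothetical record of the verification layers described above.

    None means the check has not been done yet.
    """
    credible_source_found: Optional[bool] = None         # source context / reverse-image search
    matches_official_information: Optional[bool] = None  # claim compared with official information
    detector_synthetic_score: Optional[float] = None     # 0.0-1.0 from any detection tool
    authority_confirms: Optional[bool] = None            # direct clarification from an authority


def assess(checks: ImageClaimChecks, detector_threshold: float = 0.9) -> str:
    """Combine the layers; the threshold and two-signal rule are assumptions."""
    against = []
    if checks.credible_source_found is False:
        against.append("no credible source")
    if checks.matches_official_information is False:
        against.append("conflicts with official information")
    if (checks.detector_synthetic_score is not None
            and checks.detector_synthetic_score >= detector_threshold):
        against.append("detector flags image as synthetic")
    if checks.authority_confirms is False:
        against.append("authority denies the claim")

    supporting = [
        checks.credible_source_found,
        checks.matches_official_information,
        checks.authority_confirms,
    ]

    # Require at least two independent signals before calling a claim false.
    if len(against) >= 2:
        return "treat as false"
    # Only treat the claim as supported when every non-detector layer agrees.
    if not against and all(value is True for value in supporting):
        return "treat as supported"
    return "unverified: do not share as proof"


# Roughly the shape of the Jabalpur case; the 0.95 score is an invented value.
jabalpur_like = ImageClaimChecks(
    credible_source_found=False,
    detector_synthetic_score=0.95,
    authority_confirms=False,
)
print(assess(jabalpur_like))  # treat as false

# A high detector score alone is not enough to reach a verdict.
print(assess(ImageClaimChecks(detector_synthetic_score=0.99)))
# unverified: do not share as proof
```

The design choice worth noting is the default: anything short of several agreeing layers stays "unverified", which mirrors the idea of handling an image as a claim rather than as proof.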

So while this is only a small sample, it points to a clear editorial conclusion. The problem with fake images is not just that they exist. It is that they can be inserted into already sensitive moments - religion, tragedy, politics - where people are likely to react first and verify later.

And once that becomes normal, the burden of trust shifts onto everyone else.